Optimizing our design token system

An Academia case study • 2026

Eighteen months after their introduction, tokens had become central to the success of our design system. However, a variety of systemic issues were degrading their usefulness and would continue to do so without improvements. I recruited engineering support to fix these issues and release an updated token set.

Impact of v1 token set

Our first version of tokens was introduced in 2024. Over the following 18 months, the token set improved the consistency of design work across the Academia experience. We tightened up our brand expression and reduced unnecessary tokens. We also saw significant improvements in our designer-to-developer hand-off process, evidenced by fewer changes during design QA.

Issues identified

Limited resources are dedicated to our design system. To date, we have prioritized only the changes that were absolutely necessary.

Since the first launch, our brand, organization, and engineering process had all changed considerably.

Our brand had become more defined than it was when tokens were first introduced, and those brand decisions had evolved our UI and typography styles.

After 18 months of regular use, I had made changes to support designer ease-of-use. This included tweaks to token names for better discoverability and adjustments to token values to better align with design decisions.

Company strategy had shifted to include more 0-to-1 work, which meant more non-component UI was being used in early-stage work.

Design tokens had become increasingly valuable as our engineering process shifted to include more AI tools. Unfortunately, those tools also introduced invented variables. Using Claude Code’s documentation feature, we wanted to introduce guidance and rules to reduce these hallucinations.

The plan

Auditing the current state of tokens

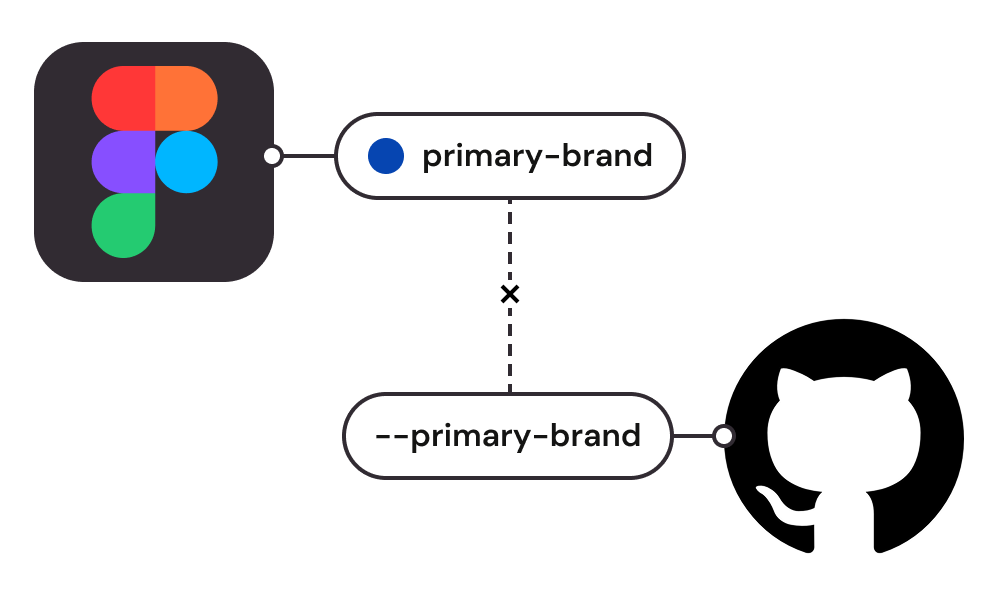

First, my engineering partner pulled all the existing tokens from our codebase. We compared them to the current state of tokens in our Figma library file to understand what had changed, what was new, and which tokens conflicted.
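The comparison described above can be sketched as a simple set difference. This is a minimal illustration, not the tooling we actually used: it assumes both sources have already been exported to flat name-to-value dictionaries, and every token name and value shown is hypothetical.

```python
def audit_tokens(code_tokens: dict[str, str], figma_tokens: dict[str, str]) -> dict[str, list[str]]:
    """Bucket tokens into new, code-only, and value-changed groups."""
    code_names = set(code_tokens)
    figma_names = set(figma_tokens)
    shared = code_names & figma_names
    return {
        # Present in Figma but not yet in the codebase
        "new_in_figma": sorted(figma_names - code_names),
        # Present in the codebase but missing from Figma (renamed, removed, or invented)
        "only_in_code": sorted(code_names - figma_names),
        # Same name on both sides, different value
        "value_changed": sorted(n for n in shared if code_tokens[n] != figma_tokens[n]),
    }


# Hypothetical sample data for illustration only
code_tokens = {
    "corner-radius-sm": "4px",
    "stroke-light": "#e5e5e5",
    "background-beige": "#f5f0e6",
}
figma_tokens = {
    "border-radius-sm": "4px",
    "stroke-light": "#e0e0e0",
    "background-beige": "#f5f0e6",
    "background-beige-subtle": "#faf7f0",
}

report = audit_tokens(code_tokens, figma_tokens)
for bucket, names in report.items():
    print(bucket, names)
```

Note that a rename shows up as a pair: the old name lands in `only_in_code` and the new name in `new_in_figma`. Matching those pairs still takes human judgment, which is why the reconciliation step was manual.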

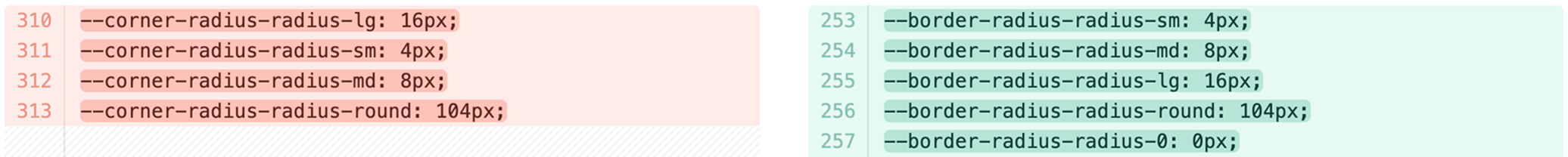

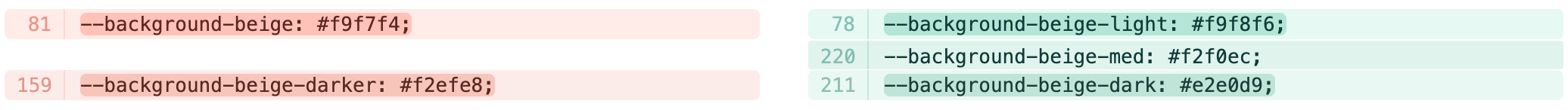

Some conflicts are purposeful, like:

Our naming change from ‘corner radius’ to ‘border radius’ to align with CSS language.

We needed more variation in our beige choices as background colors. Our beige palette has become our go-to supporting color.

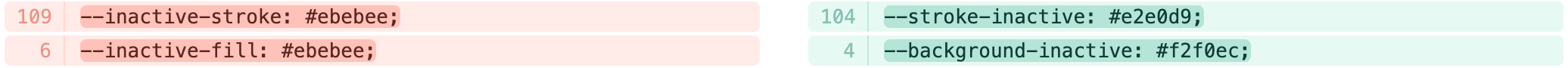

The interactive-based names for tokens were originally meant to be component-specific. After their use cases expanded, renaming them allowed for better discovery in Figma. Leading with the token type (‘background’, ‘stroke’) ensures that similar tokens are grouped in the Figma palette window.

Some conflicts deserve to be walked back:

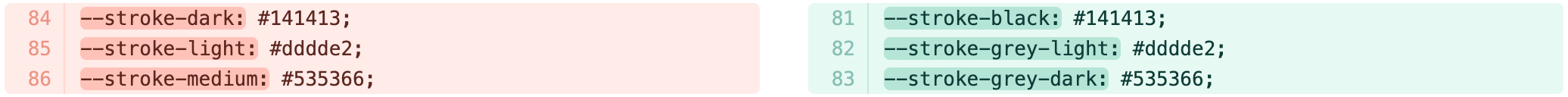

At some point I had updated the names of the stroke color options to be more precise. However, this wasn’t a change worth keeping, so I reverted to the original -light, -medium, and -dark convention.

The last category of changes was value-only.

These were to be expected as a natural result of iteration.

Updating the source tokens

After I reconciled all conflicts, I updated the tokens in the Figma library.

Some changes needed additional mapping details. For a small subset of tokens we needed to map old tokens to the new naming convention so as not to break what was already in production.
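One way to keep old names working is to publish them as aliases that point at the new tokens. The sketch below assumes tokens ship as CSS custom properties on `:root`; the rename map and the helper name `legacy_alias_css` are hypothetical, and our actual mapping mechanism may differ.

```python
# Hypothetical old -> new token names, for illustration only
RENAMED_TOKENS = {
    "corner-radius-sm": "border-radius-sm",
    "corner-radius-md": "border-radius-md",
}


def legacy_alias_css(renamed: dict[str, str]) -> str:
    """Emit a :root block where each old token name resolves to its new token."""
    lines = [f"  --{old}: var(--{new});" for old, new in sorted(renamed.items())]
    return ":root {\n" + "\n".join(lines) + "\n}"


css = legacy_alias_css(RENAMED_TOKENS)
print(css)
```

With aliases like these in place, production code that still references an old name keeps rendering correctly while it is migrated to the new convention.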

This step was also an opportunity to identify and correct hallucinated tokens created by AI coding tools. All hallucinated tokens got re-mapped to their proper counterpart.
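Finding invented tokens can be partly automated by scanning stylesheets for `var(--…)` references that are not in the published token set. This is a rough sketch under that assumption, with hypothetical token names; it only looks at CSS custom property references and would miss tokens referenced in other ways.

```python
import re

# Captures the custom property name inside var(--name ...)
VAR_REFERENCE = re.compile(r"var\(\s*--([A-Za-z0-9_-]+)")


def find_unknown_tokens(css_source: str, known_tokens: set[str]) -> list[str]:
    """Return referenced custom properties that are not in the known token set."""
    referenced = set(VAR_REFERENCE.findall(css_source))
    return sorted(referenced - known_tokens)


# Hypothetical published token names
known = {"border-radius-sm", "stroke-light", "background-beige"}

# Hypothetical stylesheet containing one invented token
sample_css = """
.card {
  border-radius: var(--border-radius-sm);
  border: 1px solid var(--stroke-lightest);
  background: var(--background-beige, #fff);
}
"""

unknown = find_unknown_tokens(sample_css, known)
print(unknown)
```

Each name the scan reports still needs a person to decide which real token it should be re-mapped to.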

Setting up safeguards

Hallucinated tokens were cropping up in our codebase as the use of AI tools increased. To curb this, I worked with another engineer to introduce Claude Skill documentation that reduces the AI’s output of invented variables. The Skill guides Claude Code to discover and use existing DS components instead of building custom UI, and to use CSS custom properties instead of hardcoded values.

Early signal shows a +108% relative improvement. Without the Skill, we see a 45% success rate. With the Skill, we’ve seen that increase to 94%.

Publish & QA

Finally, the new tokens were published, and we solicited engineering and design support to QA the entire experience to identify any mistakes.

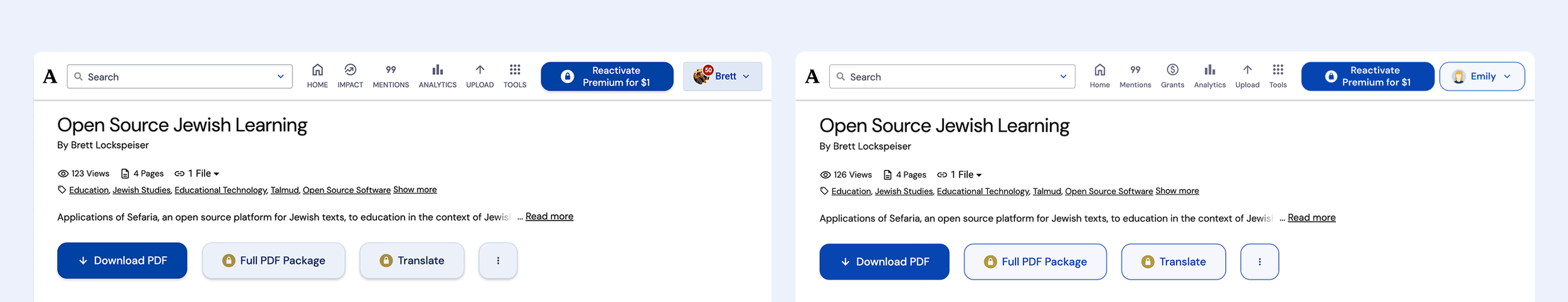

LISWP before token update

LISWP after the token update